Last August, former Tesla AI engineer Tim Zaman posted on X that a Tesla AI cluster, built using 10,000 Nvidia H100 chips, was ready to go live.

Musk said in a post on X in January that while a Dojo supercomputer cost $500 million to build, Tesla would spend more than that on Nvidia hardware in 2024. At this point, the table stakes for being competitive in AI are at least several billion dollars per year.

Oracle co-founder and chairman Larry Ellison, a former Tesla board member and an investor in X, said in December that xAI had secured Nvidia GPUs through Oracle to create the first version of Grok. xAI needed more than Oracle could provide.

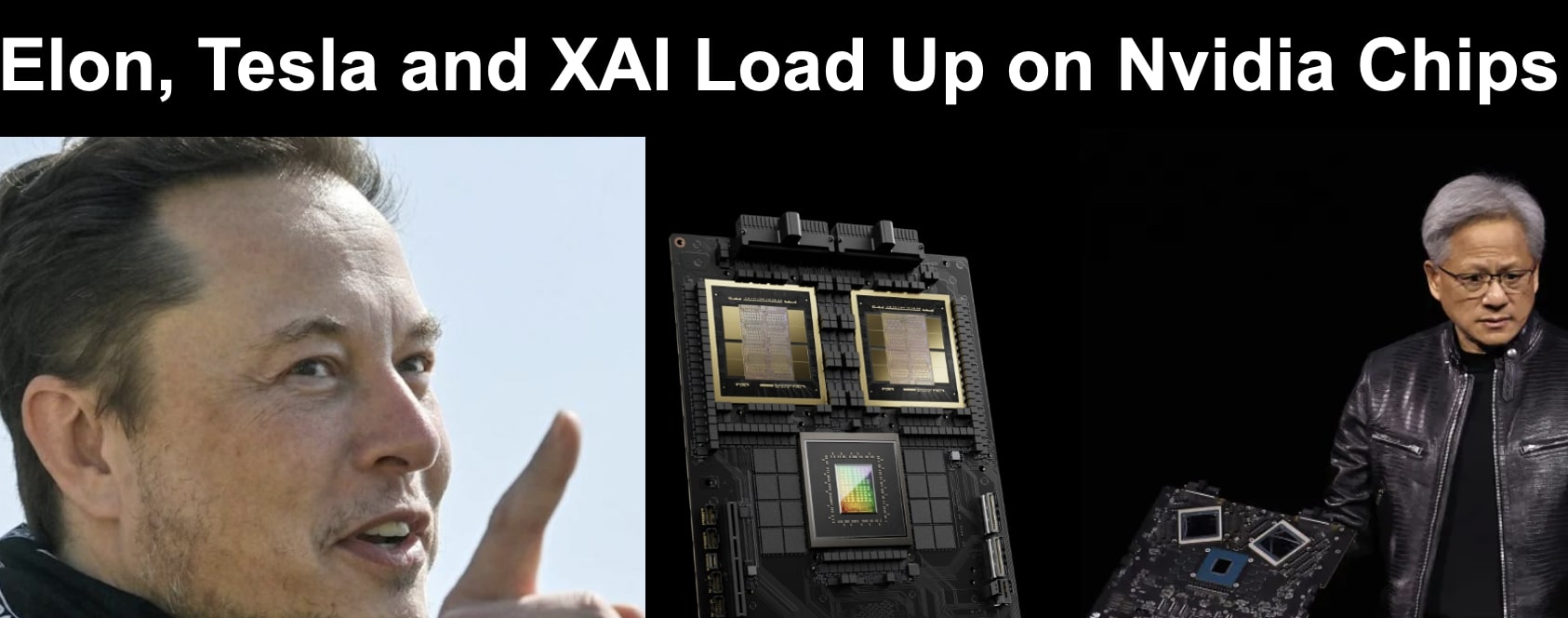

This week, Nvidia CEO Jensen Huang launched the new Nvidia B200 chip. Tesla is listed as one of six major clients for the new chip.

Brian Wang is a futurist thought leader and a popular science blogger with 1 million readers per month. His blog, Nextbigfuture.com, is ranked the #1 science news blog. It covers many disruptive technologies and trends, including space, robotics, artificial intelligence, medicine, anti-aging biotechnology, and nanotechnology.

Known for identifying cutting-edge technologies, he is currently a co-founder of a startup and a fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an angel investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio shows and podcasts. He is open to public speaking and advising engagements.