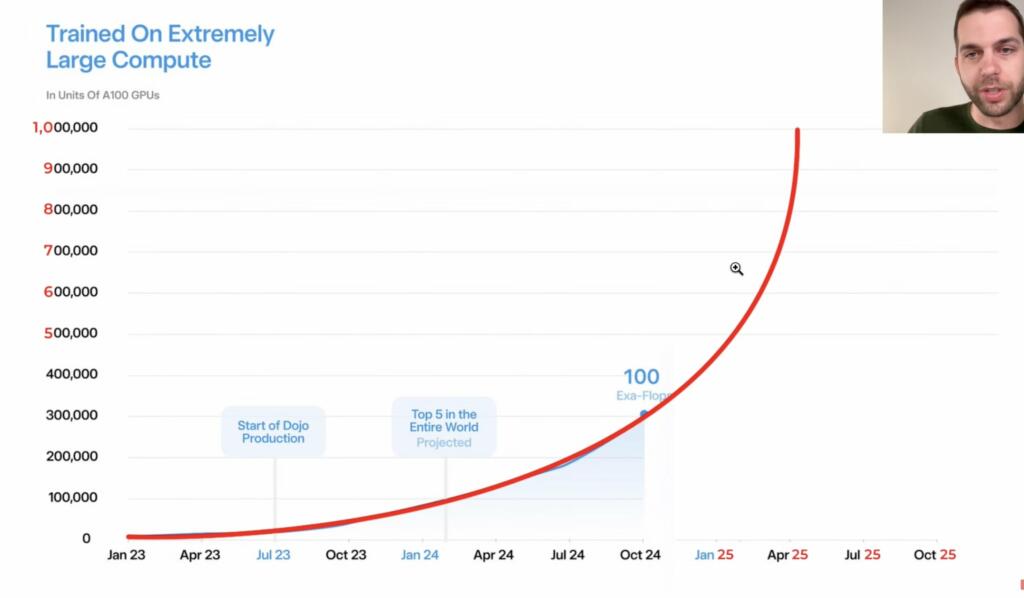

Tesla is starting production of the Dojo AI training supercomputer next month. Tesla will have 13 Exaflops of AI training compute by the end of 2023 and 100 Exaflops by the end of 2024.

Jan 2023: 3 Exaflops of AI compute, 10,000 Nvidia A100

June 2023: 5.5 Exaflops, 17,000 Nvidia A100

Oct 2023: 13 Exaflops, 40,000 Nvidia A100

Feb 2024: 33 Exaflops, 100,000 Nvidia A100

October 2024: 100 Exaflops

Mid 2025: 300 Exaflops
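The roadmap pairs each Exaflops figure with an A100 count, so the per-GPU throughput implied by those pairs can be checked with simple division. The sketch below is only illustrative arithmetic on the numbers quoted above; the function names are hypothetical, and the A100-equivalent estimates for the 100 and 300 Exaflops milestones are an extrapolation that assumes the Feb 2024 ratio holds, not something Tesla has stated.

```python
# Roadmap figures as quoted in the article: (date, exaflops, Nvidia A100 count).
ROADMAP = [
    ("Jan 2023", 3.0, 10_000),
    ("June 2023", 5.5, 17_000),
    ("Oct 2023", 13.0, 40_000),
    ("Feb 2024", 33.0, 100_000),
]

TERAFLOPS_PER_EXAFLOP = 1_000_000


def teraflops_per_gpu(exaflops, gpu_count):
    """Per-GPU throughput (teraflops) implied by a total and a GPU count."""
    return exaflops * TERAFLOPS_PER_EXAFLOP / gpu_count


def implied_gpu_count(exaflops, tflops_per_gpu):
    """GPU count needed to reach a total, assuming a fixed per-GPU rate."""
    return exaflops * TERAFLOPS_PER_EXAFLOP / tflops_per_gpu


for date, exaflops, gpus in ROADMAP:
    rate = teraflops_per_gpu(exaflops, gpus)
    print(f"{date}: {rate:.0f} teraflops per A100")

# Assumption (not from the article): reuse the Feb 2024 ratio to estimate
# how many A100 equivalents the later milestones would correspond to.
feb_2024_rate = teraflops_per_gpu(33.0, 100_000)
for date, exaflops in [("October 2024", 100.0), ("Mid 2025", 300.0)]:
    gpus = implied_gpu_count(exaflops, feb_2024_rate)
    print(f"{date}: about {gpus:,.0f} A100 equivalents")
```

The implied rate works out to roughly 300 to 330 teraflops per A100, which is in the neighborhood of Nvidia's published peak of 312 teraflops for dense FP16/BF16 tensor operations on that GPU. That suggests the Exaflops figures are peak low-precision AI throughput rather than FP64.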

And will be trained on enormous amounts of compute pic.twitter.com/BsmG9Vse6I

— Tesla AI (@Tesla_AI) June 21, 2023

Tesla is building the foundation models for autonomous robots pic.twitter.com/VUES9jU3ze

— Tesla AI (@Tesla_AI) June 21, 2023

These models will learn from a huge set of extremely diverse data from the Tesla fleet pic.twitter.com/nVaVDUPpCk

— Tesla AI (@Tesla_AI) June 21, 2023

AI supercomputers can use 8-bit floating point (FP8) precision. This is different from regular supercomputers, which run 64-bit floating point (FP64).

Nvidia announced the DGX GH200 AI supercomputer at Computex in Taipei in May 2023. It uses 256 Grace-Hopper Superchips, connected by 36 NVLink Switches, to provide over 1 exaflops of FP8 AI performance (or nearly 9 petaflops of FP64 performance). The system further touts 144TB of unified memory, 900 GB/s of GPU-to-GPU bandwidth and 128 TB/s bisection bandwidth. Nvidia is readying the product for end-of-year availability, and notes its Grace Hopper Superchips have entered full production.

Nvidia is also building a larger DGX GH200-based AI supercomputer called Helios. Helios connects four DGX GH200 systems, for a total of 1,024 Grace Hopper Superchips, using Nvidia’s Quantum-2 InfiniBand networking. Nvidia plans to bring the system online by the end of the year. Helios will provide about 4 exaflops of AI performance (FP8) and, although that is not the intended use case, would deliver about 34.8 petaflops of theoretical peak FP64 performance.
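The Helios figures make the gap between FP8 and FP64 throughput concrete. The sketch below only divides the numbers quoted above (4 systems, 1,024 Superchips, about 4 exaflops FP8, about 34.8 petaflops FP64); the variable names are hypothetical and the results are rounded peak figures, not measured performance.

```python
# Helios figures as quoted in the article (approximate peak values).
HELIOS_SYSTEMS = 4
HELIOS_SUPERCHIPS = 1024
HELIOS_FP8_EXAFLOPS = 4.0
HELIOS_FP64_PETAFLOPS = 34.8

PETAFLOPS_PER_EXAFLOP = 1000
TERAFLOPS_PER_PETAFLOP = 1000

# FP64 share of one DGX GH200 system, in petaflops.
fp64_per_system_pf = HELIOS_FP64_PETAFLOPS / HELIOS_SYSTEMS

# Per-Superchip throughput at each precision.
fp8_per_chip_pf = HELIOS_FP8_EXAFLOPS * PETAFLOPS_PER_EXAFLOP / HELIOS_SUPERCHIPS
fp64_per_chip_tf = HELIOS_FP64_PETAFLOPS * TERAFLOPS_PER_PETAFLOP / HELIOS_SUPERCHIPS

# How many times larger the FP8 figure is than the FP64 figure.
fp8_to_fp64_ratio = (
    HELIOS_FP8_EXAFLOPS * PETAFLOPS_PER_EXAFLOP / HELIOS_FP64_PETAFLOPS
)

print(f"FP64 per DGX GH200 system: {fp64_per_system_pf:.1f} petaflops")
print(f"FP8 per Superchip: {fp8_per_chip_pf:.2f} petaflops")
print(f"FP64 per Superchip: {fp64_per_chip_tf:.1f} teraflops")
print(f"FP8 / FP64 ratio: about {fp8_to_fp64_ratio:.0f}x")
```

The 8.7 petaflops per system matches the "nearly 9 petaflops" quoted for a single DGX GH200, and the ratio of roughly 115x shows why an "exaflop" AI system is not comparable to an exaflop on the FP64 benchmarks used to rank traditional supercomputers.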

This is real: this is happening. This Train is going at max speed and cannot be stopped. (Pun intended).

For context, today, our compute clusters have 0.3% of idle time; and 84% of jobs are high priority. We want to be in a place where we have an excess of compute. https://t.co/7j1UbTYla8

— Tim Zaman (@tim_zaman) June 21, 2023

I should clarify that Dojo V1 is highly optimized for vast amounts of video training vs general purpose AI. Dojo V2 will address these limitations.

— Elon Musk (@elonmusk) June 21, 2023

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.