Meta AI is available online for free.

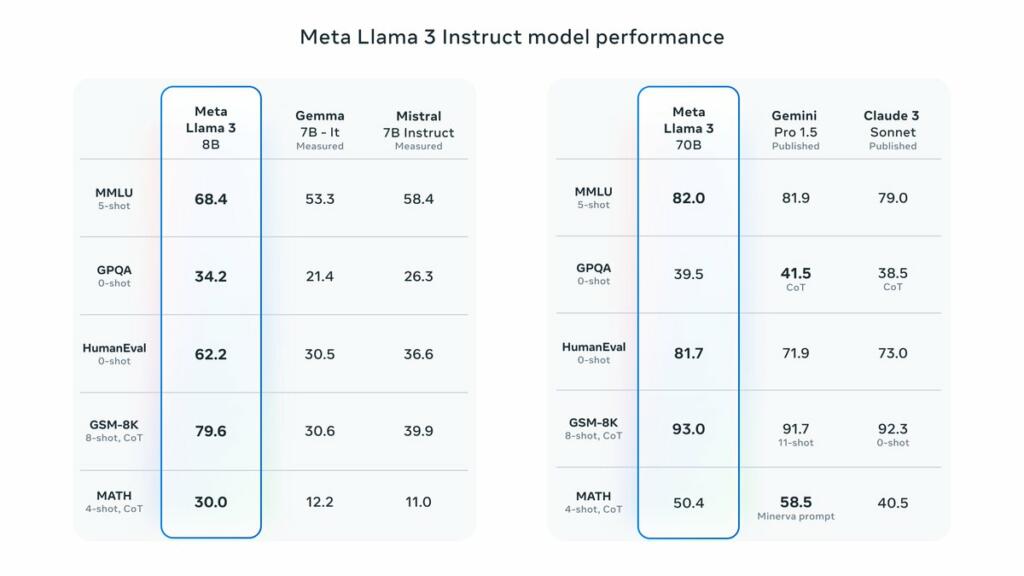

The small 8B model beats Mistral 7B and Gemma 7B.

The 70B beats Claude 3 Sonnet (closed source Anthropic model) and competes against Gemini Pro 1.5 (closed source model from Google).

Meta plans to release a larger model and is developing multimodal capabilities.

HumanEval is a benchmark for code generation, and the Llama 3 models post leading scores on it.
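For readers wondering how a code generation score is computed: HumanEval results are commonly reported as pass@k, the chance that at least one of k generated solutions passes a problem's unit tests. Below is a minimal sketch of the usual unbiased estimator, 1 - C(n-c, k) / C(n, k), where n is the number of samples drawn and c is the number that passed. The function name and the per-problem counts are made up for illustration and are not Meta's numbers or evaluation setup.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Estimate pass@k for one problem.

    n: samples generated, c: samples that passed the tests, k: budget.
    """
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("need 0 <= c <= n and 1 <= k <= n")
    if n - c < k:
        # Fewer than k failing samples: any draw of k must include a pass.
        return 1.0
    # 1 minus the probability that all k drawn samples are failures.
    return 1.0 - comb(n - c, k) / comb(n, k)


# Hypothetical (n, c) counts for three problems, for illustration only.
results = [(10, 3), (10, 0), (10, 10)]
score = sum(pass_at_k(n, c, 1) for n, c in results) / len(results)
print(f"pass@1 = {score:.3f}")  # prints "pass@1 = 0.433"
```

The benchmark score is the average of this value over all problems, so a model that solves every problem on the first try would score 1.0.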

Key highlights

• 8B and 70B parameter openly available pre-trained and fine-tuned models.

• Trained on more than 15T tokens, 7x+ larger than Llama 2’s dataset!

• Improved tokenizer with vocabulary of 128K tokens for better performance.

• State-of-the-art performance across industry benchmarks.

• New capabilities, including enhanced reasoning and coding.

• 3x more efficient training than Llama 2.

• New trust and safety tools with Llama Guard 2, Code Shield, and CyberSec Eval 2.

• Integrated into Meta AI, and available in more countries across our apps.

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting-edge technologies, he is currently a Co-Founder of a startup and fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.

I wish there was a test for truthfulness. That is, I want something that will guarantee against false LLM statements, citations, and conclusions. For example, before final output of any citation, do what a human reviewer should do, and check if the citation and paper actually exist on the internet. Sometimes they don’t and this most basic of failures can be dangerous or even fatal to human beings.

It’s hallucinations that will pop the AI bubble if they aren’t addressed this year.

It’s staggering how quickly the AI race is expanding.

Thanks for breaking this down for us, Brian.