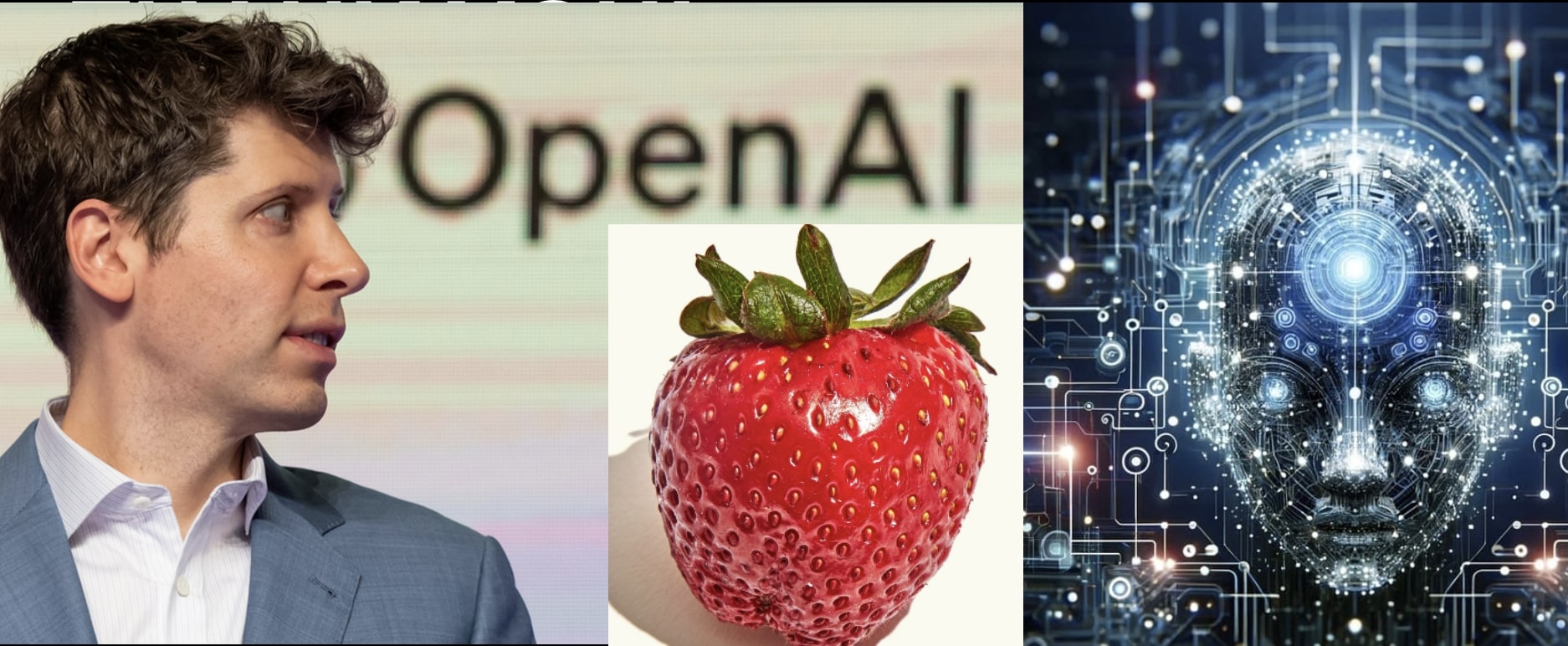

OpenAI’s rumored project Strawberry is focused on enhancing the reasoning capabilities of its AI models. Strawberry is reportedly based on Q* (QStar), the reasoning advance that supposedly triggered the power struggle inside OpenAI and the temporary firing of CEO Sam Altman.

Strawberry supposedly enables AI to perform long-horizon tasks (LHT), which require a model to plan ahead and execute a series of actions over an extended period of time. This technology aims to improve AI’s ability to understand and interact with the world, allowing it to autonomously search the internet and conduct deep research. By improving how AI models think and plan, OpenAI hopes to unlock new levels of intelligence and bring AI closer to human-level intelligence or beyond.

ChatGPT maker OpenAI is working on a novel approach to its artificial intelligence models in a project code-named “Strawberry,” according to a person familiar with the matter and internal documentation reviewed by Reuters. The project, details of which have not been previously reported, comes as the Microsoft-backed startup races to show that the types of models it offers are capable of delivering advanced reasoning capabilities.

How Strawberry works is a tightly kept secret even within OpenAI, the person said. The document describes a project that uses Strawberry models with the aim of enabling the company’s AI to not just generate answers to queries but to plan ahead enough to navigate the internet autonomously and reliably to perform what OpenAI terms “deep research,” according to the source.

Ilya Sutskever was the Chief Scientist at OpenAI, but he has left and formed his own company, Safe Superintelligence. Ilya knows about QStar and Strawberry. It would be tough to keep this breakthrough secret. Others in the community are also pursuing reasoning breakthroughs.

Two sources described viewing earlier this year what OpenAI staffers told them were Q* demos, capable of answering tricky science and math questions out of reach of today’s commercially-available models.

Reasoning is key to AI achieving human or super-human-level intelligence.

Strawberry includes a specialized form of what is known as post-training of OpenAI’s generative AI models: adapting the base models to hone their performance in specific ways after they have already been “trained” on reams of generalized data.

The post-training phase of developing a model involves methods like fine-tuning, a process used on nearly all language models today that comes in many flavors, such as having humans give feedback to the model based on its responses and feeding it examples of good and bad answers.

Strawberry has similarities to a method developed at Stanford in 2022 called “Self-Taught Reasoner” or “STaR”, one of the sources with knowledge of the matter said. STaR enables AI models to “bootstrap” themselves into higher intelligence levels via iteratively creating their own training data, and in theory could be used to get language models to transcend human-level intelligence, one of its creators, Stanford professor Noah Goodman, told Reuters.
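To make the "bootstrap" idea concrete, here is a minimal toy sketch of a STaR-style loop: generate a rationale and answer for each problem, keep only the rationales that reach the correct answer, fine-tune on those, and repeat. Nothing here reflects how Strawberry actually works, which is not public. `ToyModel`, `Problem`, `fine_tune`, and the `difficulty` field are hypothetical stand-ins, and the rule that training on difficulty d unlocks difficulty d + 1 is an assumption made purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Problem:
    question: str
    answer: int
    difficulty: int  # hypothetical label, used only by the toy model


@dataclass(frozen=True)
class ToyModel:
    skill: int  # the toy model solves problems with difficulty <= skill

    def generate(self, problem):
        """Return (rationale, answer); correct only within the model's skill."""
        if problem.difficulty <= self.skill:
            return f"worked steps for {problem.question}", problem.answer
        return f"guess for {problem.question}", problem.answer + 1  # wrong on purpose


def fine_tune(base, examples):
    """Toy assumption: training on difficulty d also unlocks difficulty d + 1."""
    if not examples:
        return base
    hardest = max(p.difficulty for p, _ in examples)
    return ToyModel(skill=max(base.skill, hardest + 1))


def star_loop(base, problems, iterations):
    model = base
    history = []  # number of self-generated training examples kept per round
    for _ in range(iterations):
        kept = []
        for p in problems:
            rationale, answer = model.generate(p)
            if answer == p.answer:  # keep only rationales that reach the right answer
                kept.append((p, rationale))
        history.append(len(kept))
        # Each round fine-tunes from the base model on the newly collected data.
        model = fine_tune(base, kept)
    return model, history


problems = [Problem(f"q{d}", d * 10, d) for d in range(1, 6)]
final_model, history = star_loop(ToyModel(skill=1), problems, iterations=5)
print(history)
```

The point of the sketch is only the shape of the loop: the model's own filtered outputs become the next round's training set, so the pool of usable examples grows each iteration.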

Quiet-STaR: Language Models Can Teach Themselves to Think Before Speaking

Quiet-STaR is a method that helps language models (LMs) to improve their predictions by teaching them to generate rationales, or internal thoughts, for each piece of text they produce. This method builds on an earlier system called STaR, which helped LMs learn by using rationales in a question-answering context. Quiet-STaR addresses three main challenges: the high computational cost of generating text, teaching the LM how to produce and use internal thoughts, and predicting beyond just the next word. The solution includes a new sampling algorithm that operates token by token, special tokens to mark the start and end of a thought, and an improved training technique. As a result, the model better predicts difficult parts of the text and improves its performance on complex reasoning tasks without needing task-specific training. This suggests Quiet-STaR is a significant advancement toward more general and scalable reasoning in language models. The quote from Kierkegaard at the end underlines the idea that understanding comes from reflection, just as Quiet-STaR allows an LM to “understand” text by reflecting on its internal rationale.
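The start and end markers described above can be pictured with a small toy sketch. This is not the Quiet-STaR implementation: the marker strings are illustrative, `toy_thought` is a hypothetical stand-in for a model sampling a rationale, and the simple serial loop here ignores the efficient sampling and training the summary mentions. It only shows a thought being wrapped in markers after each token, and the visible text being recovered by dropping everything between the markers.

```python
START, END = "<|startofthought|>", "<|endofthought|>"  # illustrative marker names


def toy_thought(prefix):
    """Hypothetical thought generator; a real model would sample these tokens."""
    return [f"think-about-{prefix[-1]}"]


def with_thoughts(tokens, think=toy_thought):
    """After each token, append a thought wrapped in start/end markers."""
    out = []
    for i, tok in enumerate(tokens):
        out.append(tok)
        out.extend([START, *think(tokens[: i + 1]), END])
    return out


def strip_thoughts(tokens):
    """Recover the visible text by dropping everything between the markers."""
    visible, inside = [], False
    for tok in tokens:
        if tok == START:
            inside = True
        elif tok == END:
            inside = False
        elif not inside:
            visible.append(tok)
    return visible


annotated = with_thoughts(["2", "+", "2"])
print(annotated)
print(strip_thoughts(annotated))
```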

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technology and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.

So Q* would be a kind of level 3 AI, autonomous and agentic, but internal. Claude is grounded at level 2 until it improves its error rate enough to be fit for level 3. Of course, OpenAI/Microsoft leadership is too greedy for power to wait.

Q* will only work if attention is continuously high enough to give an exceptionally low error rate, and this is where ChatGPT fails badly, independent of compute. Q* did not work for ChatGPT, so they rebranded it to Strawberry. But Q* might work for Claude, if they improve it.