Nvidia has increased compute power faster than Moore’s law for the past 8 years. Nvidia plans to launch new chips every 6 months for several years. The pace of their innovation is increasing.

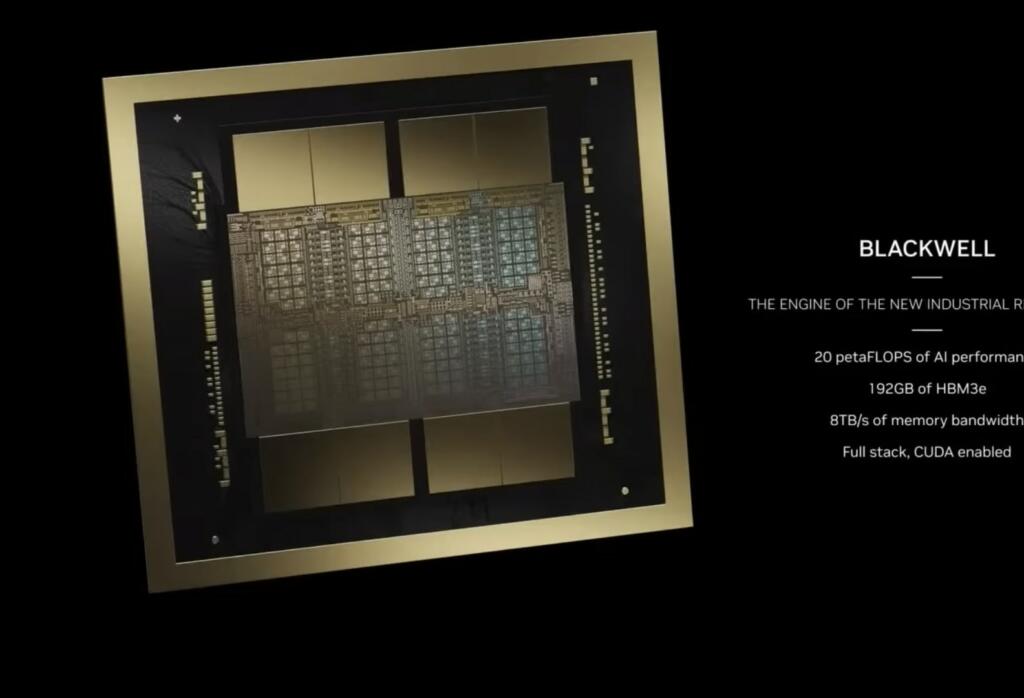

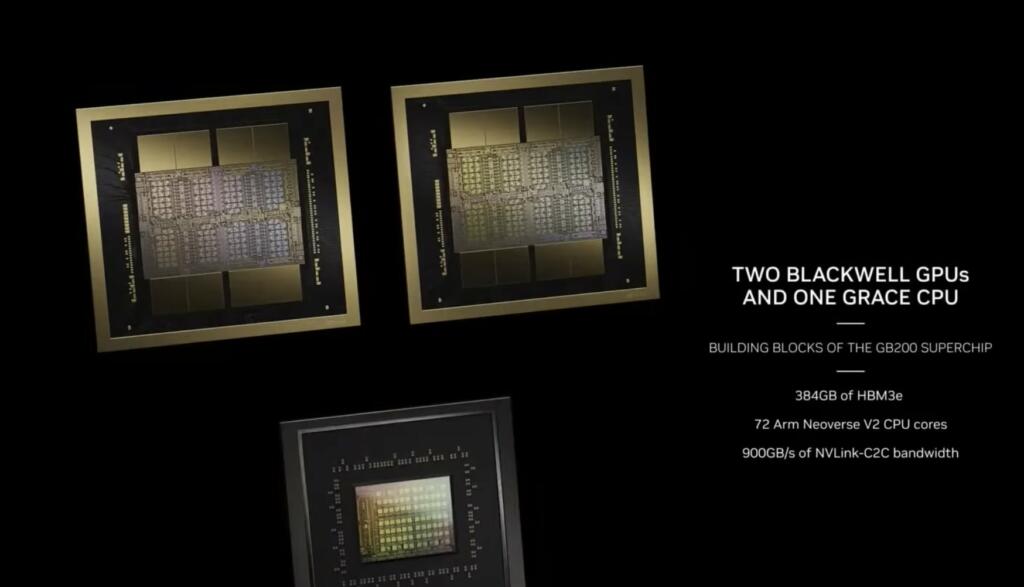

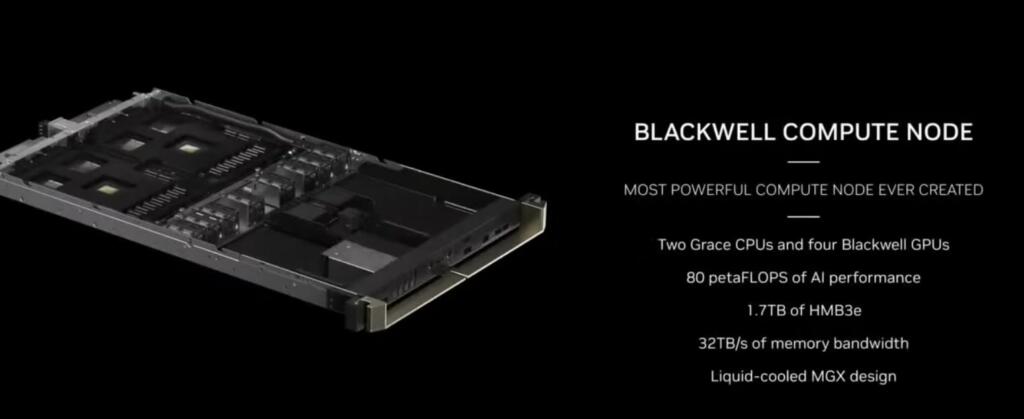

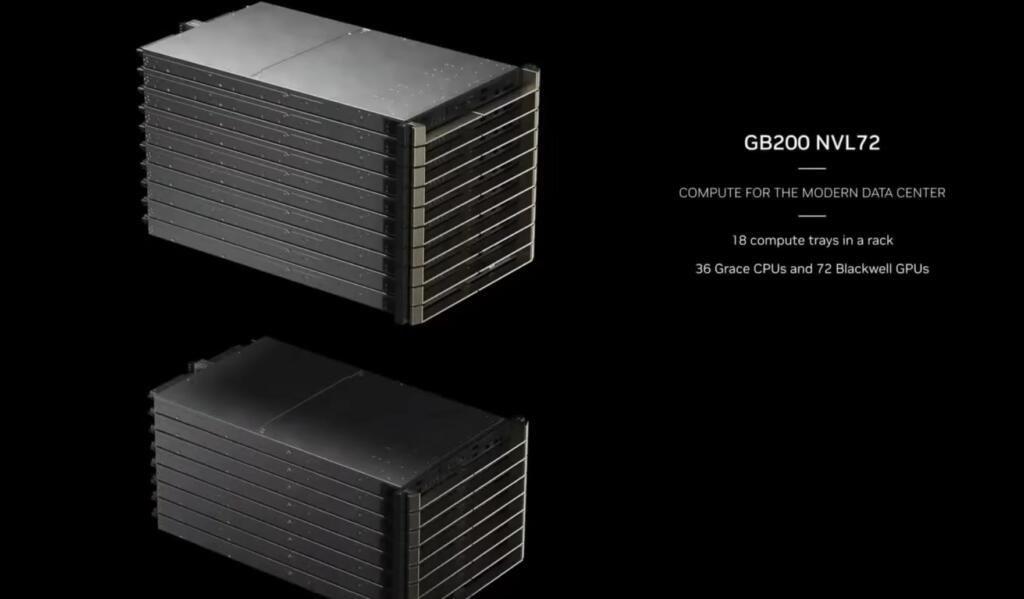

The Nvidia Blackwell GPU is not just a chip; it is an entire system weighing 1.5 tons.

A 32,000-GPU data center built with Nvidia Blackwells would have 642 exaflops of compute. One rack will have 1.4 exaflops.
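As a rough sanity check on those figures, the short sketch below derives the implied per-GPU throughput and the implied number of GPUs per rack from the three numbers quoted above. The function and constant names are hypothetical, and the calculation assumes the data center and rack figures are quoted at the same numeric precision, which the text does not state.

```python
# Figures quoted in the post (precision not stated in the text).
TOTAL_EXAFLOPS = 642.0   # whole data center
TOTAL_GPUS = 32_000      # GPUs in the data center
RACK_EXAFLOPS = 1.4      # one rack


def per_gpu_petaflops(total_exaflops: float, gpus: int) -> float:
    """Implied throughput of one GPU, in petaflops (1 exaflop = 1000 petaflops)."""
    return total_exaflops * 1000.0 / gpus


def implied_racks(total_exaflops: float, rack_exaflops: float) -> float:
    """Number of racks needed to reach the total, assuming identical racks."""
    return total_exaflops / rack_exaflops


def implied_gpus_per_rack(total_exaflops: float, gpus: int, rack_exaflops: float) -> float:
    """GPUs per rack implied by the quoted totals."""
    return gpus / implied_racks(total_exaflops, rack_exaflops)


if __name__ == "__main__":
    print(f"Per GPU: {per_gpu_petaflops(TOTAL_EXAFLOPS, TOTAL_GPUS):.2f} petaflops")
    print(f"Racks:   {implied_racks(TOTAL_EXAFLOPS, RACK_EXAFLOPS):.0f}")
    print(f"GPUs per rack: {implied_gpus_per_rack(TOTAL_EXAFLOPS, TOTAL_GPUS, RACK_EXAFLOPS):.1f}")
```

Under these assumptions the quoted numbers work out to roughly 20 petaflops per GPU and about 70 GPUs per rack.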

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting edge technologies, he is currently a Co-Founder of a startup and fundraiser for high potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.

1000x in 8 years? I don’t think so.

Comparing FP16 to FP4 is like comparing a V8 to a V4 engine: the comparison is unfair.

If we recalibrate everything to FP8 (assuming FP4 = 1/2 FP8, and so on) and normalize to 2016, the result is roughly a 263x increase in equivalent compute power:

2016 FP8 score = 1

2017 FP8 score = 6.8

2020 FP8 score = 32.6

2022 FP8 score = 105.3

2024 FP8 score = 263.2
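The normalization above can be reproduced with a few lines of Python. This is a sketch, not the commenter's own calculation: the raw TFLOPS figures and precisions per generation are not given in the text and are assumed here (19 at FP16, 130 at FP16, 620 at FP16, 4,000 at FP8, 20,000 at FP4), chosen because they reproduce the scores listed. The names `GENERATIONS`, `fp8_equivalent`, and `normalized_scores` are hypothetical.

```python
# (year, architecture, quoted peak TFLOPS, precision in bits)
# ASSUMPTION: these raw figures are not in the text; they are the values
# that reproduce the commenter's FP8 scores.
GENERATIONS = [
    (2016, "Pascal", 19, 16),
    (2017, "Volta", 130, 16),
    (2020, "Ampere", 620, 16),
    (2022, "Hopper", 4000, 8),
    (2024, "Blackwell", 20000, 4),
]


def fp8_equivalent(tflops: float, bits: int) -> float:
    """Scale a throughput figure to FP8 terms: FP16 counts double, FP4 counts half."""
    return tflops * bits / 8


def normalized_scores(generations):
    """Return {year: FP8-equivalent score relative to the first entry}."""
    _, _, base_tflops, base_bits = generations[0]
    base = fp8_equivalent(base_tflops, base_bits)
    return {
        year: round(fp8_equivalent(tflops, bits) / base, 1)
        for year, _, tflops, bits in generations
    }


if __name__ == "__main__":
    for year, score in normalized_scores(GENERATIONS).items():
        print(f"{year} FP8 score = {score}")
```

Linear scaling by bit width is the commenter's simplifying assumption; real workloads do not necessarily gain or lose throughput in exact proportion to precision.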

All good and such, but Nvidia WAS primarily a video card company.

Is all this new development going to allow 100% ray traced games soon?

Which must be why Intel just stopped selling XPoint/Optane, kind of like the way they unloaded their power-efficient ARM precursor right before the phone market took off.

How much money did they spend on stock buybacks and how much did they just get from the CHIPS act? Oh, yeah. $8.5 billion.

I agree it’s partially valid to do inference at FP4, but part of the progress came from the two precision cuts: first from 16 to 8 bits, then from 8 to 4 bits. The Pascal-to-Volta jump is the largest, but it is obscured by the exaggerated geometric progression.

That said, the march towards more and more compute is still mind-blowing.

YouTuber “Coreteks” did a video the other day entitled “The FUTURE of GPUs: PCM”. In the video he discussed AMD and/or NVIDIA adopting Phase Change Memory (PCM) as a stackable (low-heat) memory for GPUs, which could give them a huge increase (hundreds-fold) in processing power.

A human brain is estimated at about 10-100 PFLOPS. Once AI neural architecture is figured out, each of these ‘superchips’ is likely capable of human-level intelligence. And in a few years they will cost just a few thousand dollars, easily cheap enough to be in every home, car and household robot.

Can it mow the grass, cook dinner, wash the car, change the oil, clean house?

Today, no. But a humanoid robot will be able to do all of that in a few years.

You do know we already have robomow and robovac.