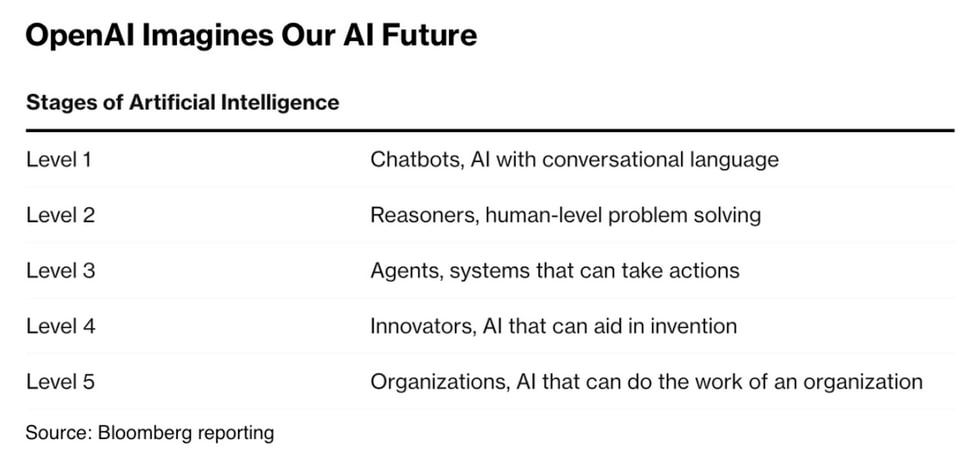

OpenAI reportedly internally introduced a new five-tier system to track its progress toward AGI. The classification system ranges from Level 1 (current conversational AI) to Level 5 (AI capable of running entire organizations).

— Rowan Cheung (@rowancheung) July 12, 2024

Brian Wang is a Futurist Thought Leader and a popular Science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends including Space, Robotics, Artificial Intelligence, Medicine, Anti-aging Biotechnology, and Nanotechnology.

Known for identifying cutting-edge technologies, he is currently a Co-Founder of a startup and fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker and guest at numerous interviews for radio and podcasts. He is open to public speaking and advising engagements.

This document invalidates the hypothesis of reaching AGI in 2025 and superintelligence in 2027.

GPT-5 will be at level 2 or 3 at best, and it will be out in 2026. GPT-6 will be at level 4, maybe 5, and will be out in 2029. AGI will happen when all jobs are taken, meaning AI running an organization. Superintelligence would be like running a whole civilization.

Once they get to level 2, I feel like level 3 and maybe 4 fall almost instantly.

The biggest problem with agents based on current LLMs is that they don’t do well at reasoning. Fix that, and we’ll quickly have very capable agents.

And a lot of innovation (level 4) isn’t magic – it’s more about reasoning about what the fundamental problem is to be solved, maybe looking for good analogies and reasoning about them to see if there’s a solution from one domain that can inspire a solution in another.

Who’s to say AI itself will not create a cascading, self-reinforcing effect? It goes from A1 to A5 because it just assumes it should? The lack of an external actor that looks at an AI’s actions without any consequence to itself, an honest broker evaluating AI actions from outside, concerns me. Yeah, I know I ask more questions about things I understand less. How else can I learn? (Yes, I know, I need to get out and party more. Know what? I honestly believe that…)

Well honestly they are at level 1 right now. I had asked how a lunar colony would be powered and the response was “wind and solar power”.

I asked GPT-4o that, and got solar, nuclear, and fuel cells. All very much realistic…

But yes, they have said that we are at 1. Although in some areas, I’d think more 1.5.