Mark Zuckerberg plans to acquire 350,000 Nvidia H100 GPUs to help Meta build a next-generation AI with human-like intelligence.

Zuckerberg mentioned the figure today as he announced his company’s long-term effort to develop an artificial general intelligence (AGI), or an AI that can learn and be used to perform a variety of tasks.

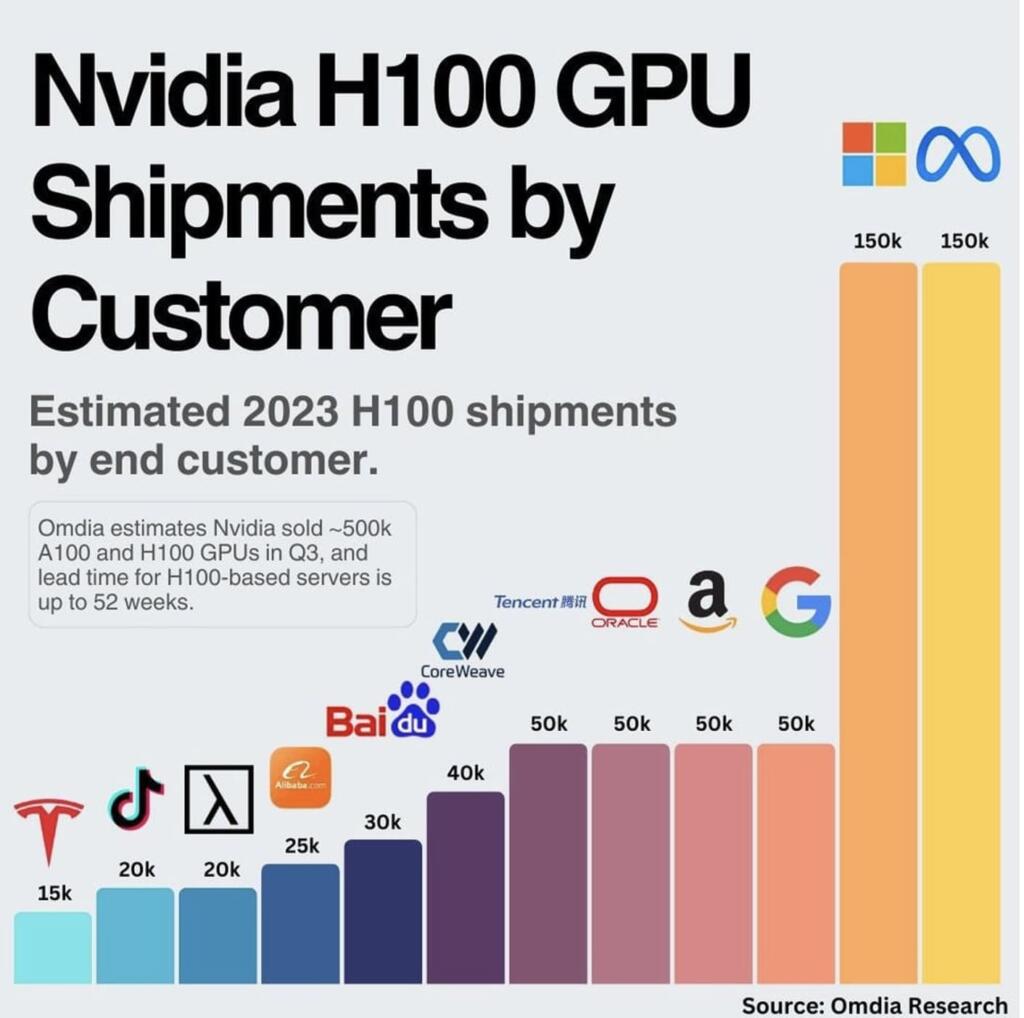

By the end of 2024, Meta will have around 350,000 Nvidia H100s. Including other GPUs (perhaps from AMD and other sources), Meta will have compute equivalent to about 600,000 H100s.

Nvidia H100s deliver roughly 3 to 8 petaflops of peak FP8 AI performance. At that rate, 600,000 H100 equivalents would be about 1.8 to 4.8 zettaflops.
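As a quick sanity check of this arithmetic, here is a minimal Python sketch. It assumes aggregate peak FP8 throughput scales linearly with GPU count (real clusters sustain less than peak), and the function and constant names are illustrative only. At 3 petaflops per GPU the low end is 1.8 zettaflops; a per-GPU figure of 3.5 petaflops would give 2.1.

```python
# Back-of-envelope aggregate FP8 compute for Meta's fleet.
# Assumption: per-GPU peak of 3 to 8 petaflops (the article's range),
# multiplied linearly by GPU count. Sustained throughput will be lower.
H100_EQUIVALENTS = 600_000
PFLOPS_PER_H100_LOW = 3.0
PFLOPS_PER_H100_HIGH = 8.0

# 1 zettaflop = 1,000 exaflops = 1,000,000 petaflops
PFLOPS_PER_ZFLOPS = 1_000_000


def fleet_zettaflops(gpus: int, pflops_per_gpu: float) -> float:
    """Return aggregate peak compute in zettaflops."""
    return gpus * pflops_per_gpu / PFLOPS_PER_ZFLOPS


low = fleet_zettaflops(H100_EQUIVALENTS, PFLOPS_PER_H100_LOW)
high = fleet_zettaflops(H100_EQUIVALENTS, PFLOPS_PER_H100_HIGH)
print(f"{low:.1f} to {high:.1f} zettaflops")  # 1.8 to 4.8 zettaflops
```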

At roughly 11 A100s per H100, this is the equivalent of about 6.6 million Nvidia A100 chips, or roughly 2,000 exaflops (2 zettaflops) of AI compute by the end of 2024.
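The A100-equivalent figure can be reproduced by chaining two ratios quoted in this article: roughly 11 A100s per H100, and Tesla's 300,000 A100s for about 100 exaflops. Both ratios are rough assumptions taken from the article, and the constant names below are hypothetical.

```python
# Convert Meta's H100-equivalent count into A100-equivalents and exaflops.
# Assumptions (article's figures, not measurements):
#   - one H100 is worth roughly 11 A100s
#   - Tesla's 300,000 A100s correspond to about 100 exaflops
H100_EQUIVALENTS = 600_000
H100_TO_A100_RATIO = 11
TESLA_A100S = 300_000
TESLA_EXAFLOPS = 100

a100_equivalents = H100_EQUIVALENTS * H100_TO_A100_RATIO
exaflops_per_a100 = TESLA_EXAFLOPS / TESLA_A100S
total_exaflops = a100_equivalents * exaflops_per_a100

print(f"{a100_equivalents:,} A100-equivalents")  # 6,600,000 A100-equivalents
print(f"~{total_exaflops:,.0f} exaflops")  # ~2,200 exaflops
```

The result, about 2,200 exaflops (2.2 zettaflops), is in line with the rough 2 zettaflop estimate.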

Tesla has indicated that its goal of 300,000 Nvidia A100s corresponds to about 100 exaflops. Nvidia H100s are roughly 11 times more powerful than A100s, so Tesla's target works out to about 27,000 H100s (roughly 30,000).
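The Tesla conversion is a single division. This minimal sketch assumes the same 11x H100-to-A100 ratio used in the article and rounds up to whole GPUs; the variable names are illustrative.

```python
import math

# Assumptions (from the article, not measured): Tesla's target of
# 300,000 A100s, and one H100 worth roughly 11 A100s.
TESLA_A100_TARGET = 300_000
H100_TO_A100_RATIO = 11

# Round up, since a fractional GPU still means one more card.
h100_needed = math.ceil(TESLA_A100_TARGET / H100_TO_A100_RATIO)
print(f"~{h100_needed:,} H100s")  # ~27,273 H100s
```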

This supports the Nextbigfuture view that Tesla and others will have to increase their AI compute spending and raise their AI compute targets.

Brian Wang is a Futurist Thought Leader and a popular science blogger with 1 million readers per month. His blog Nextbigfuture.com is ranked #1 Science News Blog. It covers many disruptive technologies and trends, including space, robotics, artificial intelligence, medicine, anti-aging biotechnology, and nanotechnology.

Known for identifying cutting-edge technologies, he is currently a co-founder of a startup and a fundraiser for high-potential early-stage companies. He is the Head of Research for Allocations for deep technology investments and an Angel Investor at Space Angels.

A frequent speaker at corporations, he has been a TEDx speaker, a Singularity University speaker, and a guest on numerous radio shows and podcasts. He is open to public speaking and advising engagements.

600k H100s? I wonder if the grid can even handle it.

600,000 times about 300-400 watts each is 180-240 MW.